Why 86% of Agentic AI Pilots Never Make It to Production

The demo was flawless. The agent ingested three documents, cross-referenced a policy database, and surfaced a recommendation in under two minutes. The room was impressed. The board approved the production budget that quarter.

Eight months later, the system is still not live. The AI team says the integration spec was met. The platform team says the agent produces outputs the downstream systems can't handle. The CTO is writing a board update that contains the phrase "revised timeline" for the third time. Nobody in the room can explain, precisely, what is blocking deployment, only that it is blocked.

This is not an anecdote. This is the median outcome.

Deloitte's latest enterprise AI survey paints a picture that should make every pilot sponsor deeply uncomfortable: 30% of organisations are still exploring agentic AI options, 38% are running pilots, 14% describe themselves as deployment-ready, and just 11% have agentic systems actually operating in production. In other words, roughly one organisation in nine has anything running in production to show for it. BCG's numbers tell the same story from a different angle: only 26% of companies have moved beyond proof-of-concept to generate material value from AI. The other 74% are stuck somewhere between "it worked in the demo" and "we can't figure out why it doesn't work against real data."

Gartner reckons at least 30% of generative AI projects will be abandoned after the proof-of-concept stage by the end of 2025. Not paused. Not "deprioritised pending budget review." Abandoned.

And yet we keep funding pilots. We keep running demos at all-hands meetings where everyone claps. Then, six months later, the project quietly disappears from the quarterly roadmap and nobody really talks about it.

The question nobody seems to be asking is: why does a working demo make everything worse?

The working demo is actually the problem

Here's the thing about a successful pilot. It proves exactly one thing: that the AI logic works in isolation, against clean data, in a controlled environment, with someone nearby to catch mistakes. It proves nothing about what happens when that same logic is wired into fifteen years of inconsistent schemas, three CRM migrations, a document management system running on event-driven queues with variable latency, and a compliance framework that was designed for human decision-makers operating at human speed.

But that's not how it gets interpreted. A successful demo creates false confidence. It gets treated as proof that the system works. Period. Which means the remaining work gets scoped as a deployment exercise: "we just need to hook it up to the real systems and push it to production." Six weeks, maybe eight. Add a couple of engineers.

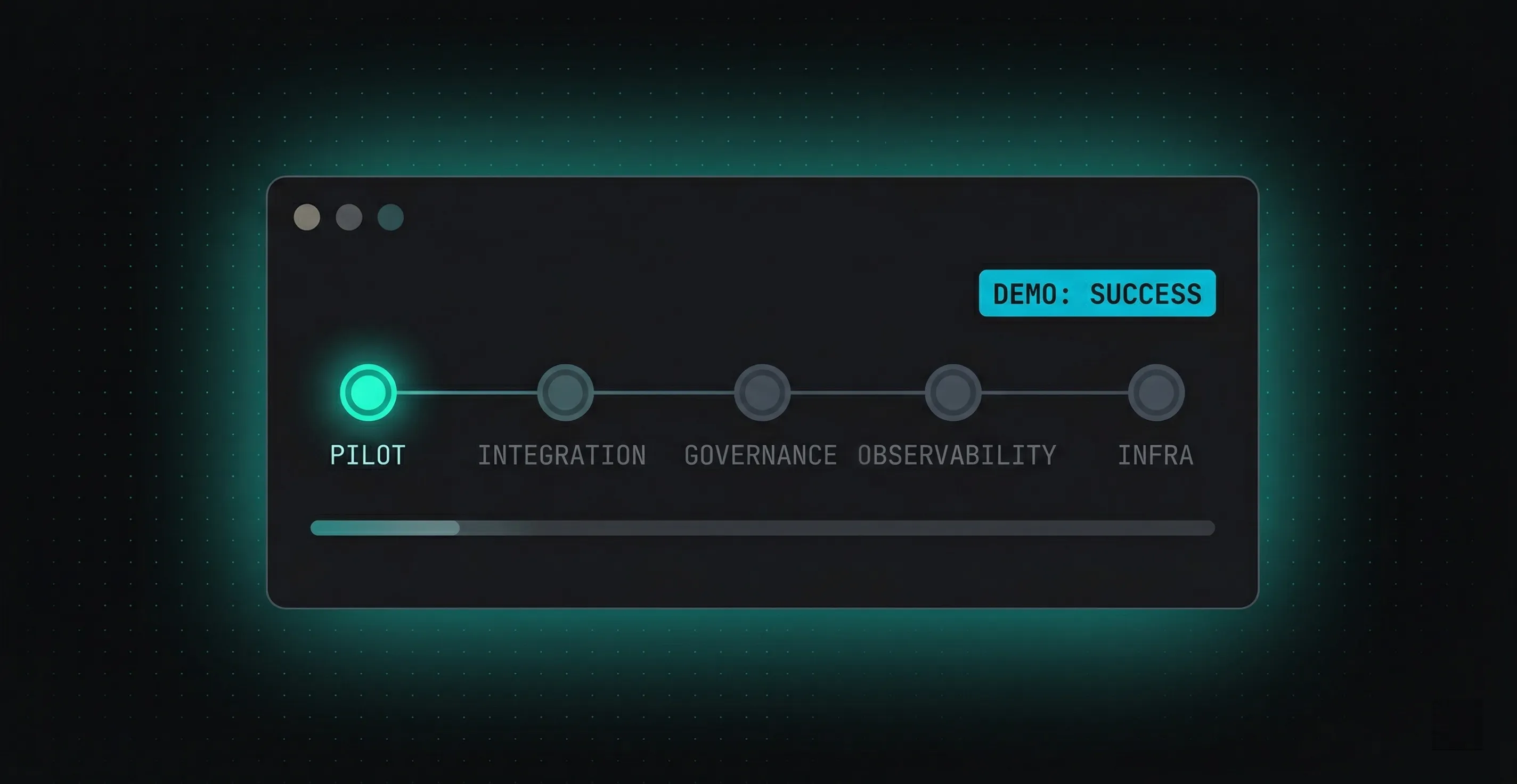

This is the category error that kills most agentic AI initiatives. The pilot-to-production journey is not a scaling exercise. It is a full re-architecture across at least five layers that simply do not exist in the pilot: legacy system integration, governance scaffolding, observability infrastructure, failover design, and infrastructure-as-code. Every one of those layers involves different skills, different tooling, and different design decisions than the ones that made the pilot work. And in most enterprises, every one of those layers belongs to a different team.

The pilot was built by the AI team. The integration layer belongs to the platform team. Governance is owned by risk and compliance. Observability sits with DevOps. Infrastructure-as-code might be a shared services function, or it might be nobody's job at all. The result is that the moment the pilot leaves the sandbox, it enters an organisational structure that might as well have been designed to ensure that no single person or team is accountable for making it work end-to-end.

And that is where things go sideways.

Five layers nobody budgeted for

The gap between a working demo and a production-grade system is not one gap. It is five, and they compound.

Legacy integration is where the dream usually dies first. The pilot was built against a clean API or a curated dataset. Production means connecting to the actual systems: a Salesforce instance that nobody fully understands, a document management platform that was migrated twice and still has orphaned records from 2017, an ERP system that exposes data through a combination of REST endpoints, SOAP services, and in at least one case, a nightly CSV export. The agent that performed beautifully against curated inputs starts producing garbage or timing out when it hits real-world data at real-world latency.
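One common way to contain this is an adapter layer that normalises every source into a single validated record before the agent ever sees it, and rejects what it cannot validate instead of passing it through. The sketch below is illustrative only: the `LoanRecord` type, the field names (`customerId`, `CustomerId`, `customer_id` and so on) and the validation rules are hypothetical stand-ins for the REST, SOAP and CSV paths described above.

```python
import csv
import io
import math
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LoanRecord:
    """Hypothetical canonical record: the only shape the agent is allowed to see."""
    customer_id: str
    amount: float
    updated_at: date
    source: str


class RejectedRecord(ValueError):
    """Raised instead of forwarding a record that failed validation."""


def _validate(customer_id, amount, updated_at, source: str) -> LoanRecord:
    cid = "" if customer_id is None else str(customer_id).strip()
    if not cid:
        raise RejectedRecord(f"{source}: missing customer_id")
    try:
        value = float(amount)
        updated = date.fromisoformat(str(updated_at).strip())
    except (TypeError, ValueError) as exc:
        raise RejectedRecord(f"{source}: unparseable field ({exc})") from exc
    if not math.isfinite(value) or value <= 0:
        raise RejectedRecord(f"{source}: implausible amount {value}")
    return LoanRecord(cid, value, updated, source)


def from_rest(payload: dict) -> LoanRecord:
    # Hypothetical JSON field names from a REST endpoint.
    return _validate(payload.get("customerId"), payload.get("amount"),
                     payload.get("updatedAt"), "rest")


def from_soap(xml_text: str) -> LoanRecord:
    # Hypothetical SOAP body; XML namespaces are omitted to keep the sketch short.
    root = ET.fromstring(xml_text)
    return _validate(root.findtext(".//CustomerId"), root.findtext(".//Amount"),
                     root.findtext(".//UpdatedAt"), "soap")


def from_nightly_csv(csv_text: str) -> tuple[list[LoanRecord], list[str]]:
    # Keep valid rows; collect rejections for a human to review.
    accepted, rejected = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        try:
            accepted.append(_validate(row.get("customer_id"), row.get("amount"),
                                      row.get("updated_at"), "csv"))
        except RejectedRecord as exc:
            rejected.append(str(exc))
    return accepted, rejected
```

The design choice worth noting is the explicit rejection path: a row with a blank ID or an amount of `abc` ends up in a review list rather than in the agent's context, where it would otherwise surface later as a confident, wrong answer.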

Governance gets added too late, or not at all. The pattern is almost always the same: build the agent, demonstrate it works, then figure out the governance framework. Which is backwards, because governance constraints should shape the architecture, not get bolted onto it after the fact. An agentic system that makes autonomous decisions needs guardrails baked into its execution path: confidence thresholds that trigger human-in-the-loop escalation, audit trails that capture not just what the agent decided but why, and policy enforcement that operates at execution speed, not at quarterly-review speed. These are architectural decisions that change how the system is built from the ground up.
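What "baked into the execution path" can mean in practice is sketched below, assuming the agent returns a decision with a confidence score and a short rationale. The 0.85 threshold, the 50,000 autonomous limit and the `AuditEntry` fields are hypothetical placeholders, not recommended values; the point is that every decision passes through the same gate, and the gate writes the audit record whether it executes or escalates.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class AgentDecision:
    application_id: str
    action: str          # e.g. "approve" or "decline"
    amount: float
    confidence: float    # 0.0 to 1.0, as reported by the agent
    rationale: str       # the "why", captured for the audit trail


@dataclass
class AuditEntry:
    application_id: str
    action: str
    outcome: str         # "executed" or "escalated"
    reasons: list
    rationale: str
    confidence: float
    timestamp: float = field(default_factory=time.time)


CONFIDENCE_THRESHOLD = 0.85     # hypothetical; tune against labelled outcomes
MAX_AUTONOMOUS_AMOUNT = 50_000  # hypothetical policy limit


def guard(decision: AgentDecision, audit_log: list) -> str:
    """Decide, inside the execution path, whether the agent may act on its own."""
    reasons = []
    if decision.confidence < CONFIDENCE_THRESHOLD:
        reasons.append(f"confidence {decision.confidence:.2f} below {CONFIDENCE_THRESHOLD}")
    if decision.action == "approve" and decision.amount > MAX_AUTONOMOUS_AMOUNT:
        reasons.append(f"amount {decision.amount} exceeds autonomous limit")
    if not decision.rationale.strip():
        reasons.append("no rationale recorded")
    outcome = "escalated" if reasons else "executed"
    entry = AuditEntry(decision.application_id, decision.action, outcome,
                       reasons, decision.rationale, decision.confidence)
    # Append-only list here; a real system would use durable, tamper-evident storage.
    audit_log.append(json.dumps(asdict(entry)))
    return outcome
```

For example, `guard(AgentDecision("A2", "approve", 80_000, 0.97, "strong history"), log)` escalates despite high confidence, because the policy limit is checked independently of the model's own certainty.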

Observability for agentic systems looks nothing like traditional application monitoring. A standard web application fails with a 500 error or a timeout. An agentic AI system fails in ways that are far harder to detect, because the failure mode is often a plausible-looking wrong answer rather than a system crash. The agent runs at 2am, processes a batch of loan applications, and makes a recommendation based on a hallucinated interpretation of a document it couldn't fully parse. Nothing in your APM dashboard flags this. No alert fires. The error is silent drift, not a thrown exception. It shows up three weeks later in the financials.
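Because the failure is a plausible wrong answer rather than an error code, the alert has to watch what the agent decides, not whether it responded. A minimal sketch, assuming a baseline approval rate is known from a validated period: track a rolling approval rate and fire when it moves further than a set tolerance from that baseline. The window size, baseline and tolerance are hypothetical, and a production system would watch several such signals (confidence distribution, escalation rate, parse failures), not just one.

```python
from collections import deque


class DecisionDriftMonitor:
    """Alerts when the rolling approval rate drifts away from a baseline."""

    def __init__(self, baseline_rate: float, tolerance: float, window: int = 200):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # 1 = approve, 0 = anything else

    def record(self, action: str) -> bool:
        """Record one decision; return True if an alert should fire."""
        self.recent.append(1 if action == "approve" else 0)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough decisions yet for a stable rate
        rate = sum(self.recent) / len(self.recent)
        return abs(rate - self.baseline_rate) > self.tolerance
```

Nothing here inspects latency or status codes. An agent that starts approving everything at 2am returns perfectly healthy responses, and only a behavioural signal like this one notices.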

Production-grade observability requires distributed tracing across the full agent chain: every tool call, every retrieval step, every decision branch, every confidence score. Without it, a plausible-looking wrong answer cannot be traced back to the step that produced it.
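The idea can be sketched with nothing but the standard library: every step in the agent chain opens a span that shares one trace ID, records its parent, and carries attributes such as the tool name and confidence score. This is a hand-rolled illustration of the concept with hypothetical step names; in practice a tracing standard such as OpenTelemetry would normally fill this role.

```python
import time
import uuid
from contextlib import contextmanager


class Tracer:
    """Collects spans for one agent run; every span shares the run's trace ID."""

    def __init__(self):
        self.trace_id = uuid.uuid4().hex
        self.spans = []
        self._open = []  # IDs of spans still running, so nested steps know their parent

    @contextmanager
    def span(self, name: str, **attributes):
        record = {
            "trace_id": self.trace_id,
            "span_id": uuid.uuid4().hex[:16],
            "parent_id": self._open[-1] if self._open else None,
            "name": name,
            "attributes": dict(attributes),
        }
        start = time.perf_counter()
        self._open.append(record["span_id"])
        try:
            yield record["attributes"]  # the step can attach results, e.g. a confidence score
        except Exception as exc:
            record["attributes"]["error"] = repr(exc)
            raise
        finally:
            self._open.pop()
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)  # spans are appended in the order they finish


# Hypothetical agent run: one decision with two nested tool calls.
tracer = Tracer()
with tracer.span("decide_loan", application_id="A-17") as decision:
    with tracer.span("retrieve", tool="document_search") as retrieval:
        retrieval["documents_found"] = 3
    with tracer.span("parse", tool="pdf_parser") as parse:
        parse["pages_parsed"] = 11
        parse["pages_total"] = 12  # the partial parse is now visible in the trace
    decision["confidence"] = 0.71
```

In the 2am loan-batch scenario above, this is what turns "the recommendation was wrong" into "the parser read 11 of 12 pages, and the decision that followed carried a confidence of 0.71".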

Failover design for non-deterministic systems requires different thinking. When a traditional service fails, you retry the request or fail over to a replica. When an agentic workflow fails mid-chain, three tool calls deep, holding intermediate state, with a partially completed decision, there is no simple retry. Hallucinations don't raise exceptions. They produce plausible-looking outputs that propagate downstream silently. Production agent workflows require durable execution patterns: state checkpoints after each meaningful step, allowing agents to survive restarts without replaying completed work. Almost nobody provisions this during the pilot.
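A minimal sketch of checkpointed execution, assuming each step is a function from the accumulated state to a new state and that the checkpoint fits in a local JSON file. Dedicated durable-execution engines do this far more robustly; the step names, demo functions and file layout here are hypothetical. The point is the shape: persist after every step, and on restart skip whatever the checkpoint says already completed.

```python
import json
import os
import tempfile
from typing import Callable

Step = tuple[str, Callable[[dict], dict]]


def run_with_checkpoints(steps: list[Step], checkpoint_path: str, initial: dict) -> dict:
    """Run steps in order, persisting state after each so a restart resumes mid-chain.

    Assumes state is JSON-serialisable and each step returns the new state.
    """
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            checkpoint = json.load(f)
    else:
        checkpoint = {"completed": [], "state": initial}

    for name, step in steps:
        if name in checkpoint["completed"]:
            continue  # finished before the restart; do not replay it
        checkpoint["state"] = step(checkpoint["state"])
        checkpoint["completed"].append(name)
        # Write a temporary file, then swap it in with os.replace (atomic on POSIX),
        # so a crash mid-write cannot corrupt the previous checkpoint.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(checkpoint, f)
        os.replace(tmp_path, checkpoint_path)
    return checkpoint["state"]


# Demo with hypothetical steps: scoring fails once, simulating a worker restart.
calls = []
failures_left = [1]


def fetch_documents(state):
    calls.append("fetch")
    return {**state, "documents": 3}


def score_application(state):
    calls.append("score")
    if failures_left[0] > 0:
        failures_left[0] -= 1
        raise RuntimeError("worker restarted mid-step")
    return {**state, "score": 0.8}


def record_decision(state):
    calls.append("decide")
    return {**state, "decision": "approve"}


steps = [("fetch", fetch_documents), ("score", score_application), ("decide", record_decision)]
path = os.path.join(tempfile.mkdtemp(), "loan-A-17.json")
try:
    run_with_checkpoints(steps, path, {"application_id": "A-17"})
except RuntimeError:
    pass  # the first attempt dies during scoring
final_state = run_with_checkpoints(steps, path, {"application_id": "A-17"})
```

After the simulated crash, the second run resumes at scoring: document fetching is not repeated, which matters when a step calls a slow external system or has side effects.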

Infrastructure-as-code is the layer that makes everything else reproducible, and it's usually missing entirely. The pilot was deployed manually. Production-grade deployment means staged rollouts that can be rolled back instantly if metrics deviate, environment parity between staging and production, and the entire infrastructure defined in code: reviewed, versioned, and deployable by anyone on the team without tribal knowledge. This is the difference between a sandbox experiment and a production system.
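The staged-rollout logic can be sketched independently of any particular deployment tool. Assuming requests are routed by a stable hash of their ID and that an error-rate metric can be observed at each stage, the controller below widens exposure stage by stage and drops to 0% as soon as the candidate's error rate exceeds the baseline by more than a tolerance. The stage percentages, the tolerance and the `observe_error_rate` callback are hypothetical; in a real setup these values would themselves live in version-controlled configuration.

```python
import hashlib
from typing import Callable

STAGES = [1, 5, 25, 50, 100]  # hypothetical percentage of traffic per stage


def routes_to_candidate(request_id: str, percent: int) -> bool:
    """Stable bucketing: the same request ID always lands in the same bucket."""
    bucket = int(hashlib.sha256(request_id.encode()).hexdigest(), 16) % 100
    return bucket < percent


def staged_rollout(observe_error_rate: Callable[[int], float],
                   baseline_error_rate: float,
                   tolerance: float) -> tuple[int, list[str]]:
    """Advance through STAGES; return the final traffic percentage and a decision log."""
    log = []
    for percent in STAGES:
        rate = observe_error_rate(percent)
        if rate > baseline_error_rate + tolerance:
            log.append(f"{percent}%: error rate {rate:.3f} breached; rolled back to 0%")
            return 0, log
        log.append(f"{percent}%: error rate {rate:.3f} within tolerance")
    return STAGES[-1], log
```

Hashing the request ID rather than sampling at random keeps each customer on one version for the whole stage, so a rollback does not leave half of a multi-step workflow on each side.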

None of these five layers is individually impossible. But they compound, and in the typical enterprise, each one is owned by a different team with no shared accountability for the overall outcome.

The real problem isn't technology. It's ownership.

Here's where the conversation usually goes wrong. The pilot stalls. The CTO diagnoses it as a resourcing problem: we need more engineers. Or a skills problem: we need people who've done this before. So the organisation hires contractors, or engages a systems integrator, or buys a platform.

And nothing changes. Because the problem was never resourcing or tooling. The problem is that nobody owns the outcome.

Adding headcount to this model doesn't help; it makes things worse. Each new hire is one person dropped into a team-shaped hole. Filling it properly would take a Platform Engineer, a DevOps specialist, a Compliance Lead, and someone who can handle the 2am scenarios. Filling a role that was never set up to succeed doesn't produce success. It produces another stakeholder in the blame loop.

Five specialists, zero ownership. Each person owns their layer, not the system. Accountability distributed across five teams is accountability that belongs to nobody. What actually ships is built by one team owning all five layers, accountable for the production outcome, not for their individual component working in isolation.

What it actually looks like when someone owns it

A fintech (Series C, growing fast) had built an agentic AI system for loan decisioning. The pilot performance was strong enough that the board approved productionisation. Eight months later, the system was still not live. The AI team and the platform team were locked in a blame loop: the AI team said the integration spec had been met; the platform team said the agent produced non-deterministic outputs that the downstream origination system couldn't handle.

The first counterintuitive decision was not to start building. A two-week paid discovery engagement was proposed, specifically because the blame loop between teams pointed to an architectural ambiguity that would make any delivery estimate unreliable.

The discovery finding was not what anyone expected. The AI model was not the problem. The pilot had been built assuming synchronous REST calls to the document management system. The production DMS operated on an event-driven queue with variable latency: between 200 milliseconds and 14 seconds depending on document complexity. The agent's timeout logic was written for synchronous response patterns, causing cascading failures on any document above a complexity threshold. This wasn't an AI problem at all. It was a messaging-architecture problem, sitting entirely upstream of the agent.
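The mismatch is easy to reproduce in miniature. The sketch below assumes a fixed 5-second timeout (a hypothetical value; the original one isn't given) applied to a queue-backed service whose latency spans the 200 ms to 14 s range reported above, with simulated time compressed so the demo runs quickly. The document names and latencies are illustrative.

```python
import asyncio

TIME_SCALE = 0.02  # 1 simulated second = 20 ms of real time, to keep the demo fast


async def dms_response(doc_id: str, latency_s: float) -> str:
    """Stand-in for the event-driven DMS: the answer arrives after latency_s."""
    await asyncio.sleep(latency_s * TIME_SCALE)
    return f"parsed:{doc_id}"


async def fetch(doc_id: str, latency_s: float, timeout_s: float):
    """Return the parsed document, or None if the caller gave up waiting."""
    try:
        return await asyncio.wait_for(dms_response(doc_id, latency_s),
                                      timeout_s * TIME_SCALE)
    except asyncio.TimeoutError:
        return None  # the caller treats a slow answer as a failure


async def run_batch(latencies: dict, timeout_s: float) -> dict:
    # Documents are fetched concurrently, as a production batch would be.
    results = await asyncio.gather(*(fetch(d, l, timeout_s) for d, l in latencies.items()))
    return dict(zip(latencies, results))


# Hypothetical documents spanning the observed latency range (simulated seconds).
latencies = {"simple": 0.2, "standard": 3.0, "complex": 14.0}
sync_style = asyncio.run(run_batch(latencies, timeout_s=5.0))         # "complex" -> None
latency_envelope = asyncio.run(run_batch(latencies, timeout_s=21.0))  # all documents arrive
```

Widening the timeout to cover the whole latency envelope makes the symptom disappear in this toy, but it still ties up a caller for up to 14 seconds per document. That is why the fix described next moved to an event-driven model rather than simply raising a number.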

The integration layer was redesigned around an async, event-driven architecture with explicit state management. Observability infrastructure was provisioned from day one. Governance checkpoints were added for human-in-the-loop escalation on edge-case credit decisions. The entire system was deployed using infrastructure-as-code with staged rollout capability.
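A minimal sketch of what explicit state management can look like, under assumptions: each application is a small state machine, the DMS signals completion with an event instead of being awaited synchronously, and edge cases route to human review. The state names, the event signature, the `edge_case` flag and the 0.85 threshold are hypothetical; the actual implementation isn't described here.

```python
from enum import Enum


class State(Enum):
    RECEIVED = "received"
    AWAITING_DOCUMENTS = "awaiting_documents"
    READY_FOR_DECISION = "ready_for_decision"
    DECIDED = "decided"
    HUMAN_REVIEW = "human_review"


# Allowed transitions; anything else is a bug and is rejected loudly.
TRANSITIONS = {
    State.RECEIVED: {State.AWAITING_DOCUMENTS},
    State.AWAITING_DOCUMENTS: {State.READY_FOR_DECISION, State.HUMAN_REVIEW},
    State.READY_FOR_DECISION: {State.DECIDED, State.HUMAN_REVIEW},
    State.DECIDED: set(),
    State.HUMAN_REVIEW: set(),
}


class LoanApplication:
    def __init__(self, application_id: str, expected_docs: set):
        self.application_id = application_id
        self.expected_docs = set(expected_docs)
        self.parsed_docs = {}
        self.state = State.RECEIVED

    def _move(self, new_state: State) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state} -> {new_state}")
        self.state = new_state

    def request_documents(self) -> None:
        # Requests go onto the DMS queue; nothing blocks waiting for the reply.
        self._move(State.AWAITING_DOCUMENTS)

    def on_document_event(self, doc_id: str, parsed_ok: bool, content: str = "") -> None:
        """Handle a DMS completion event, whether it arrives after 200 ms or 14 s."""
        if self.state is not State.AWAITING_DOCUMENTS:
            return  # late or duplicate event; the application has already moved on
        if not parsed_ok:
            self._move(State.HUMAN_REVIEW)  # never let the agent guess at an unparsed document
            return
        self.parsed_docs[doc_id] = content
        if self.expected_docs.issubset(self.parsed_docs):
            self._move(State.READY_FOR_DECISION)

    def decide(self, confidence: float, edge_case: bool, threshold: float = 0.85) -> State:
        if edge_case or confidence < threshold:
            self._move(State.HUMAN_REVIEW)
        else:
            self._move(State.DECIDED)
        return self.state
```

Latency stops being a failure condition: a slow document simply leaves the application in `AWAITING_DOCUMENTS` until its event arrives, and the current state of every application is inspectable at any time.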

Production deployment: 11 weeks from discovery completion. First live loan decision: week 12. Zero unplanned downtime in the first 90 days.

What Zillow should have taught us

The most expensive version of this failure is when the agent actually makes it to production without those layers.

Zillow's iBuying pricing agent is the canonical case. The system was making autonomous purchasing decisions: real capital deployed based on algorithmic output. When market conditions shifted faster than the model's training data could account for, the agent began systematically overpaying for homes. No observability infrastructure flagged the drift before it showed up in the quarterly results. Zillow shut down the entire division and reported a write-down in the hundreds of millions.

The Air Canada case is the governance version. The airline's customer-facing AI chatbot fabricated a bereavement fare refund policy, and the British Columbia Civil Resolution Tribunal held Air Canada liable for it. The principle is clear: if your agent says it, you own it, regardless of whether a human approved the output.

The 40% question

Gartner predicts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. Against the current production success rates, this projection implies one of two things: either the industry is about to figure out how to close the pilot-to-production gap at unprecedented speed, or we are about to see the most expensive wave of stalled enterprise technology initiatives since the first cloud migration cycle.

The organisations that will ship are the ones that treat the pilot-to-production transition for what it actually is: not a deployment, but a re-architecture requiring unified ownership across integration, governance, observability, failover, and infrastructure. One team. One accountability structure. One definition of "done" that means the system is operating in production under real load and real regulatory scrutiny, not that it passed an internal demo.

Whether your initiative lands on the right side of that projection comes down to one question that has nothing to do with your model, your data, or your AI team's capability: who actually owns the delivery?

Vanrho takes agentic AI pilots from demo to production under single-team ownership. Integration, governance, observability, infrastructure. If your pilot is stalled between teams, let's talk about what's actually blocking it.